Testing Google’s Search Generative Experience: Early Thoughts on What It Means for Search Marketing

Google’s AI search product, currently called Search Generative Experience (SGE), is now live for many users through Google Labs. This is Google’s first significant launch and integration of “AI Chat” features into the Google.com search results.

Here are a few examples, impressions, and notes on SGE so far, along with the implications for search marketers and advertisers.

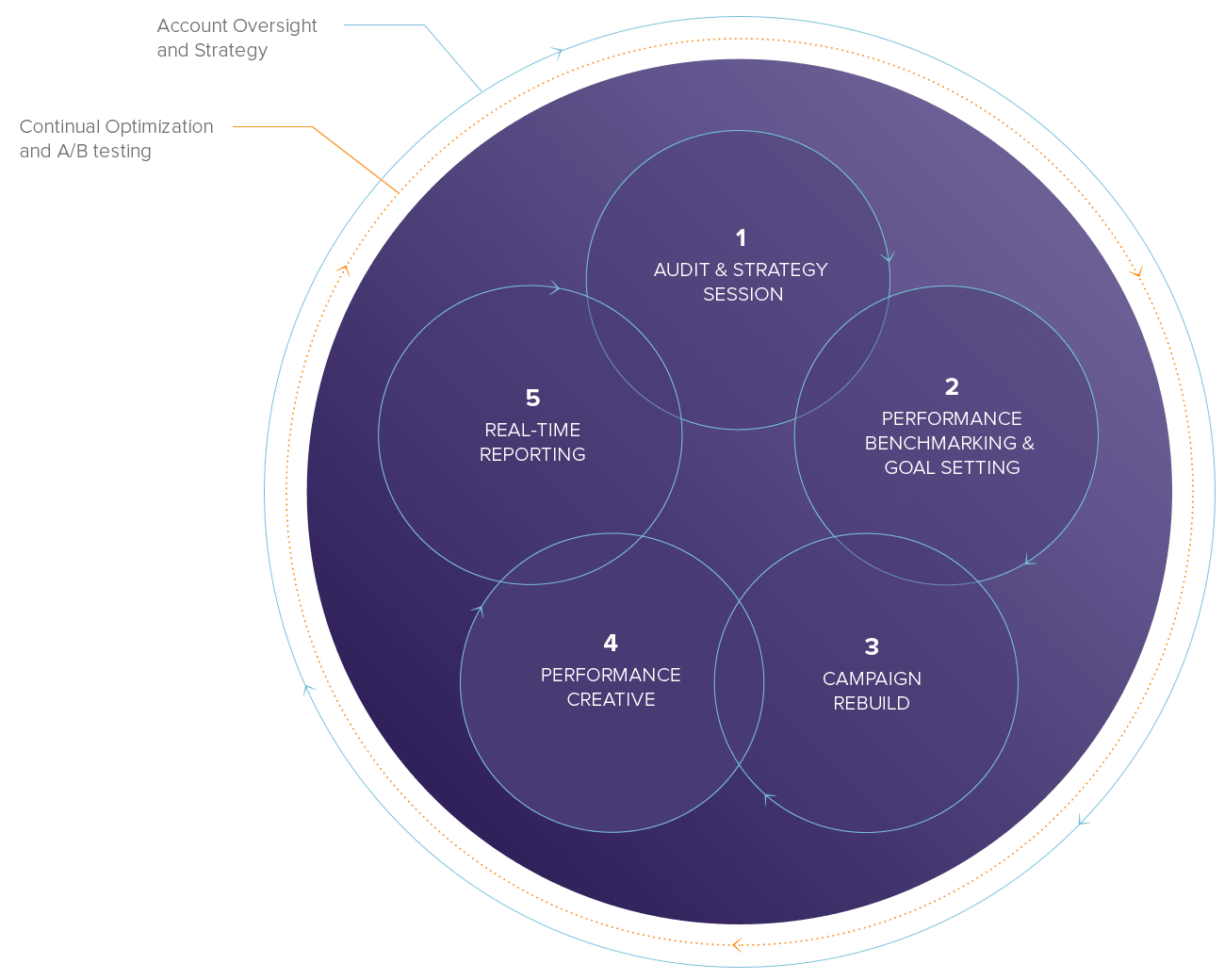

Example 1: “what is the 2023 sp500 performance”

What we think:

Even though this query is informational, it is also commercially valuable: the user is likely interested in investing and is a good candidate for a range of financial products and services. The query in our example is totally unmonetized, with no ads at all. Instead, Google is showing both an AI answer and a featured snippet.

The AI answer and the featured snippet repeat almost exactly the same content, which makes for a somewhat weak user experience. Google will have to figure out a better integration between AI content and featured snippets.

Note the 3 organic placements for CBS, S&P Global, and Financial Samurai. That’s a big win for these publishers, and SEO strategies for earning these placements are going to be key for 2023 and beyond.

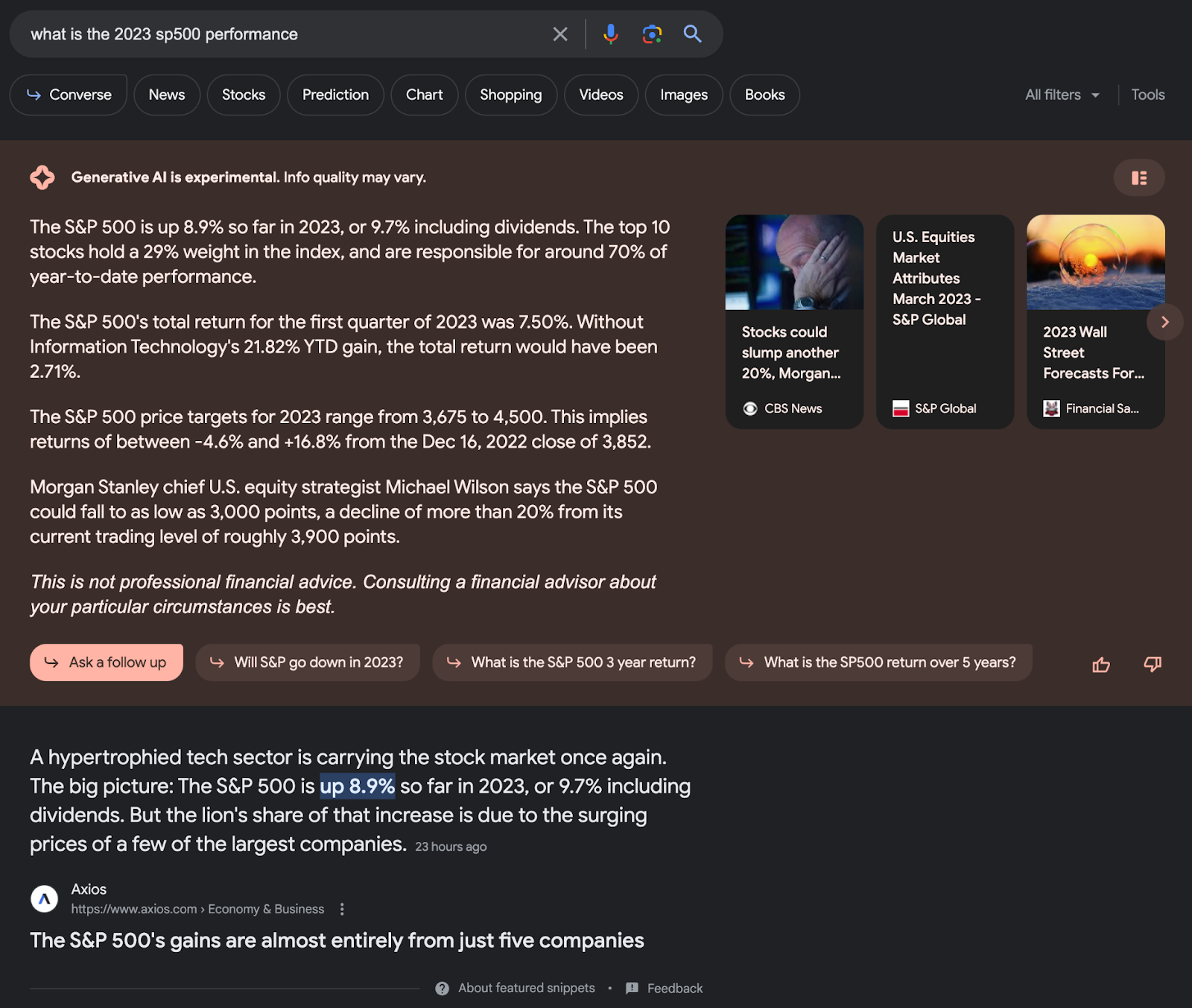

Example 2: “what is the best crm software for small business”

What we think:

In this example we see how search text ads are integrated with AI answers in Google SGE, which, judging from the example above, means basically no integration at all. The 3 top placements look like normal search ads.

Below them, the AI Chat tries to answer the question. It finds 3 reasonable-looking and traditionally optimized articles from Monday, G2, and Forbes. The Forbes and G2 results are also the #1 and #2 organic ranked pages.

The AI answer is really weird and bad: for some reason it links to an eBay listing for a software license from 2016. If you ask Google Bard the same question, the answer is much better, since it at least names reasonable CRM tools, but the result is dominated by totally unnecessary images, and Bard links the user out to a rather esoteric set of landing pages. SelectHub is the big winner from this result!

Google Bard Result for the same query

Example 3: “best men’s watches in 2023”

What we think:

What’s interesting in the ecommerce-oriented example above is that the rankings and results are totally different between the regular Shopping Ads carousel and the AI answers. The AI answers also don’t closely match the free Google Shopping listings. The AI answer appears to query and rank the shopping results differently than both the regular paid and organic listings.

The AI shopping results seem pretty reasonable at first glance and look like decent answers for the query. The text answer, of course, is pretty bad. Somewhat like the example Bard result with its repetitive and unhelpful images, Google repeats a narrow focus on the Tissot PRX, surely a fine watch but not so dominant in the category that it should occupy 70% of the answer!

Based on this, we might speculate that results from chat AI are prone to bias from a single source. Here it basically seems like Google read one article about a specific watch and stopped looking for new information.